Container-native Monitoring

08/03/2018, by Justin Cook

A JUSTIFICATION FOR DISTRIBUTED TRACING AND LOG AGGREGATION INTEGRATED WITH CONTAINER-NATIVE MONITORING

“We replaced our monolith with microservices so that every outage could be more like a murder mystery.”

Like most infrastructure components, monitoring and reporting have shown little progress in the last two decades. Log files were considered adequate because most systems were static and had few dependencies.

Over the last decade, applications have become largely distributed. Combined with microservice architecture, which emerged circa 2012, this means visibility decreases with each service added. Moreover, reconstructing an accurate story from each service’s log files is a lengthy and error-prone process.

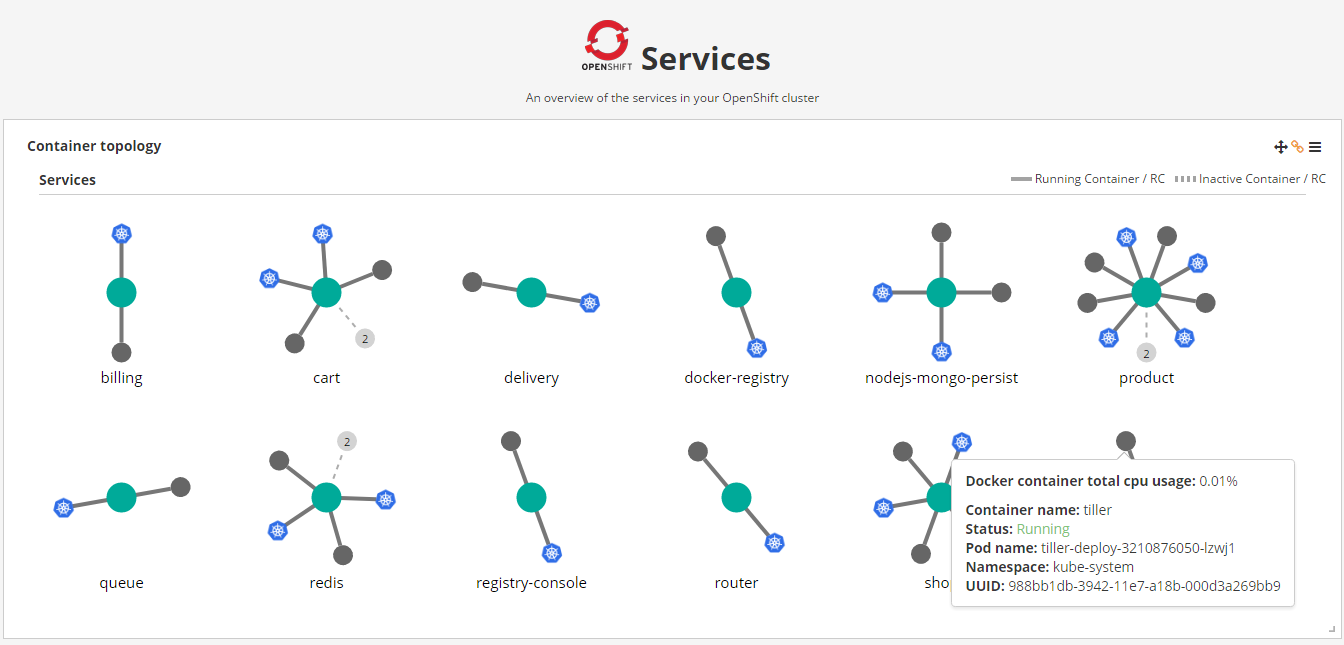

A truly decomposed suite of applications, composed of a large collection of orchestrated microservices, requires automated monitoring systems. Fortunately, this problem was recognised early on and addressed by large-scale users of microservices such as Google and Netflix.

Engineers can triage and diagnose degraded performance and outages, provided they have an accurate view of the platform’s state, including the ingress traffic that triggers a sequence of events. Those events can, and most likely will, execute services on multiple nodes. Following a single request across all of those nodes is the problem distributed tracing was born to solve.
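To make the idea concrete, here is a minimal sketch of trace-context propagation in the spirit of Google’s Dapper. It is not the API of Dapper, Jaeger or any other product: `Span`, `start_span`, `finish_span` and the three services (`gateway`, `orders`, `inventory`) are hypothetical names chosen for illustration. The ingress service mints a trace ID, every downstream call opens a child span that carries the same trace ID plus its parent’s span ID, and a collector can later rebuild the whole request as a tree.

```python
import time
import uuid
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class Span:
    """One unit of work in one service; spans sharing a trace_id describe one request."""
    trace_id: str
    span_id: str
    parent_id: Optional[str]
    service: str
    operation: str
    start: float = field(default_factory=time.time)
    end: Optional[float] = None


# Stand-in for a tracing backend; a real agent would ship finished spans to a collector.
COLLECTED: List[Span] = []


def start_span(service: str, operation: str, parent: Optional[Span] = None) -> Span:
    """Start a span, inheriting the parent's trace ID or minting a new one at ingress."""
    return Span(
        trace_id=parent.trace_id if parent is not None else uuid.uuid4().hex,
        span_id=uuid.uuid4().hex[:16],
        parent_id=parent.span_id if parent is not None else None,
        service=service,
        operation=operation,
    )


def finish_span(span: Span) -> None:
    """Record the end time and hand the span to the (in-memory) collector."""
    span.end = time.time()
    COLLECTED.append(span)


# Hypothetical services. In production each would run in its own container, possibly on
# another node, and the parent context would travel in request headers, not as an argument.
def inventory_check(parent: Span) -> None:
    span = start_span("inventory", "check_stock", parent)
    finish_span(span)


def orders_create(parent: Span) -> None:
    span = start_span("orders", "create_order", parent)
    inventory_check(span)
    finish_span(span)


def gateway_handle(path: str) -> str:
    root = start_span("gateway", f"GET {path}")  # ingress: a new trace begins here
    orders_create(root)
    finish_span(root)
    return root.trace_id


def trace_tree(trace_id: str) -> List[str]:
    """Rebuild the call tree of one trace from the collected spans, indented by depth."""
    children: Dict[Optional[str], List[Span]] = {}
    for s in COLLECTED:
        if s.trace_id == trace_id:
            children.setdefault(s.parent_id, []).append(s)
    lines: List[str] = []

    def walk(parent_id: Optional[str], depth: int) -> None:
        for child in sorted(children.get(parent_id, []), key=lambda c: c.start):
            lines.append("  " * depth + f"{child.service}: {child.operation}")
            walk(child.span_id, depth + 1)

    walk(None, 0)
    return lines
```

Calling `trace_tree(gateway_handle("/checkout"))` returns `gateway: GET /checkout`, then `orders: create_order` indented once, then `inventory: check_stock` indented twice. The key design choice is that only the small context (trace ID and parent span ID) crosses service boundaries; everything else is reported independently by each service and stitched together afterwards.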

Log files still matter. After all, viewing a stack trace or an application error can quickly lead to a diagnosis. But how does an automated monitoring system aggregate and inspect logs from distributed processes?
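One common answer is to have every service stamp its log lines with the trace ID it received, so an aggregator can merge the streams from many containers and group them per request. The sketch below assumes a hypothetical format of one JSON object per line with `ts`, `service`, `trace_id`, `level` and `msg` fields; the sample streams and the `aggregate` and `first_error` helpers are illustrative only, not the schema or API of any particular log-aggregation product.

```python
import json
from collections import defaultdict
from typing import Dict, Iterable, List, Optional

# Hypothetical container log streams: JSON lines tagged with a propagated trace ID.
ORDERS_LOG = [
    '{"ts": 1.002, "service": "orders", "trace_id": "abc", "level": "INFO", "msg": "order received"}',
    '{"ts": 1.010, "service": "orders", "trace_id": "abc", "level": "ERROR", "msg": "inventory timeout"}',
]
INVENTORY_LOG = [
    '{"ts": 1.005, "service": "inventory", "trace_id": "abc", "level": "ERROR", "msg": "database connection refused"}',
    "plain text line without structure",
]


def aggregate(streams: Iterable[Iterable[str]]) -> Dict[str, List[dict]]:
    """Merge log lines from many containers and group them by trace ID, oldest first."""
    by_trace: Dict[str, List[dict]] = defaultdict(list)
    for stream in streams:
        for line in stream:
            try:
                record = json.loads(line)
            except json.JSONDecodeError:
                continue  # unstructured line: it cannot be correlated, so skip it here
            if isinstance(record, dict) and record.get("trace_id"):
                by_trace[record["trace_id"]].append(record)
    for records in by_trace.values():
        # Assumes comparable numeric timestamps; clock skew between nodes can reorder events.
        records.sort(key=lambda r: r.get("ts", 0.0))
    return dict(by_trace)


def first_error(records: List[dict]) -> Optional[dict]:
    """Return the earliest ERROR record of a trace (a simple root-cause heuristic)."""
    return next((r for r in records if r.get("level") == "ERROR"), None)


story = aggregate([ORDERS_LOG, INVENTORY_LOG])["abc"]
for r in story:
    print(f'{r["ts"]:.3f} {r["service"]:<9} {r["level"]:<5} {r["msg"]}')
```

Interleaving the two streams shows the inventory service’s database error landing between the order being received and the order service timing out, which is exactly the story that is hard to assemble by reading each container’s log file separately. Sorting by wall-clock timestamps is the weak point of this sketch; trace and span IDs give a more reliable ordering than clocks on different nodes.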

Container-native monitoring technology is emerging. While various products cover distributed tracing and log aggregation, integrating them with container-native monitoring of a multi-tenant, distributed system of orchestrated containers is a mouthful, and a problem still waiting to be solved.

This gap exposes large-scale digital transformation efforts to significant outages and security issues. A prolonged investigation ends in a tarnished image and lost profit. Fail.

References

Google – Dapper

Netflix – A Microscope on Microservices

CNCF – Jaeger

FreshTracks – Distributed Tracing and its place in the monitoring landscape
